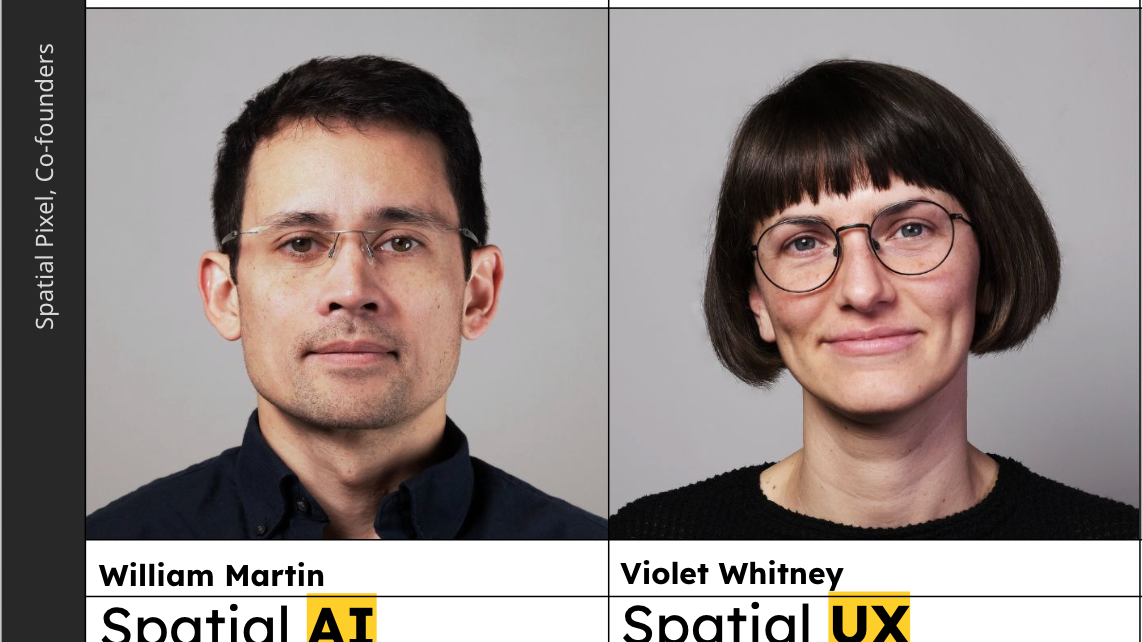

Designing for the Spaces We Share: A Conversation with Violet Whitney and William Martin of Spatial Pixel

With Spatial Pixel, ixD faculty members Violet Whitney and William Martin are building a company grounded in code, AI, and spatial computing.

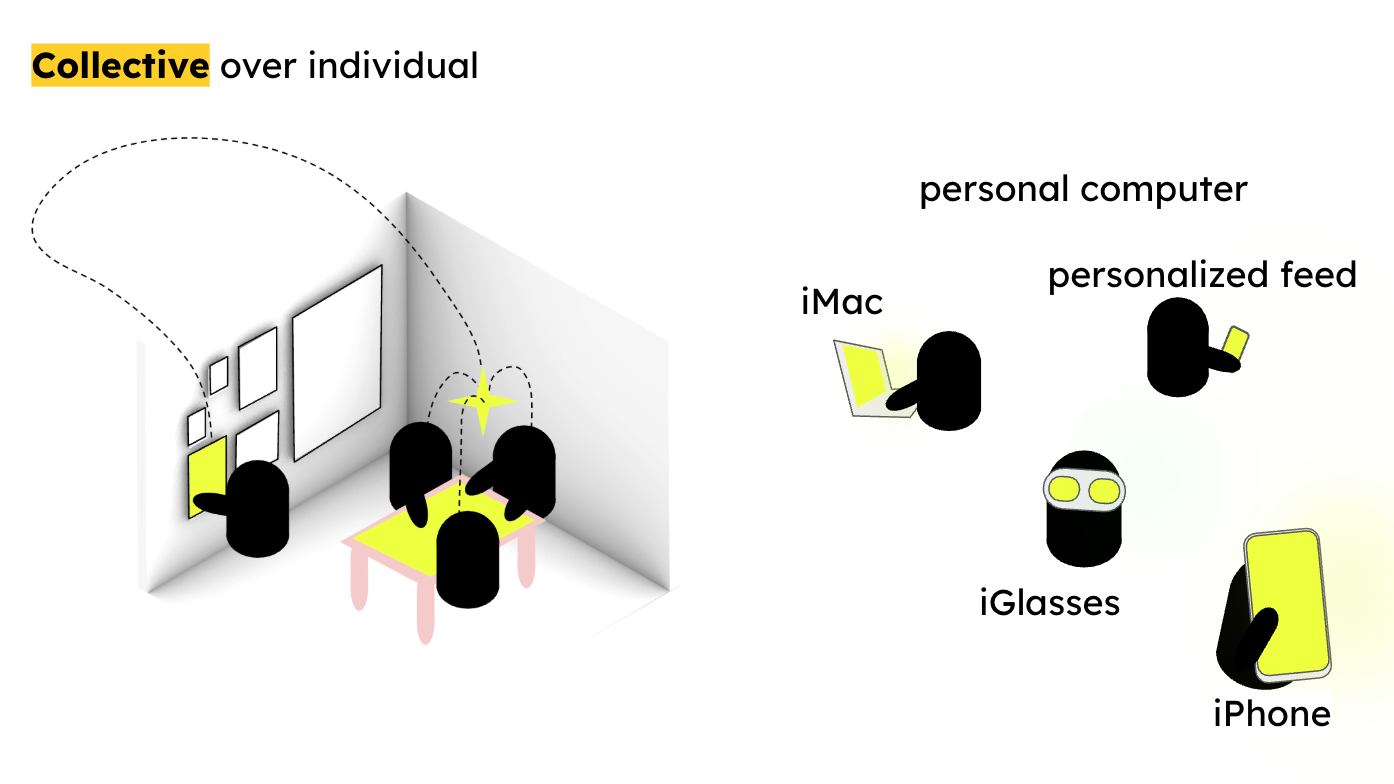

Their goal is not to pull people deeper into their individual screens but to help them step away from those screens. The idea of engaging users in the real world, rather than isolating them in a VR headset, is captured in the company’s motto: “a new kind of spatial computing, one for spaces, not for faces.”

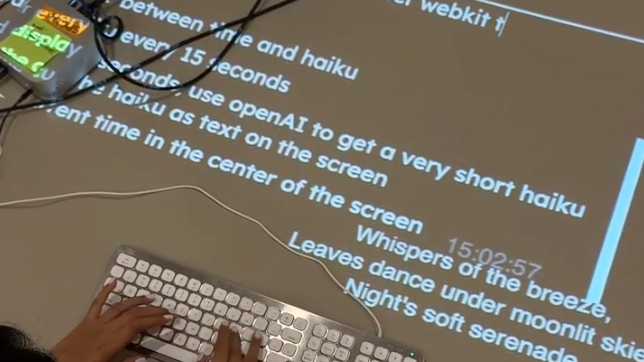

Their core product, Procession, is an open, browser-based platform for programming spatial interactions in plain language. With only a webcam and an API key, users can begin creating gesture-responsive, projection-driven environments with no advanced coding knowledge required.

Spatial Pixel Lab student fellows Sena Park and Naomi Shah (ixD class of 2026) introduce users to the technology in an onboarding video.

With each new project that they embark upon, Whitney and Martin pose a simple yet radical question: what if these technologies felt less like yet another device, and more like a natural extension of your environment?

By creating the Spatial Pixel Lab at ixD, the two co-founders have brought this technology into the SVA community through cross-disciplinary workshops that challenge students to explore its possibilities and integrate it into their existing design practices. Whitney and Martin also explore the technology in more depth in the courses they teach at ixD, such as Spatial Computing and Mixed Realities.

Spatial Pixel Beginnings: From Architecture to Ambient Computing

William Martin’s background is in architecture, but his deepest roots are in coding. “I have been coding since I was about six years old,” says Martin. “And when I was in architecture school, I became very interested in using code to design buildings, rather than focusing on the aesthetics of the buildings alone.”

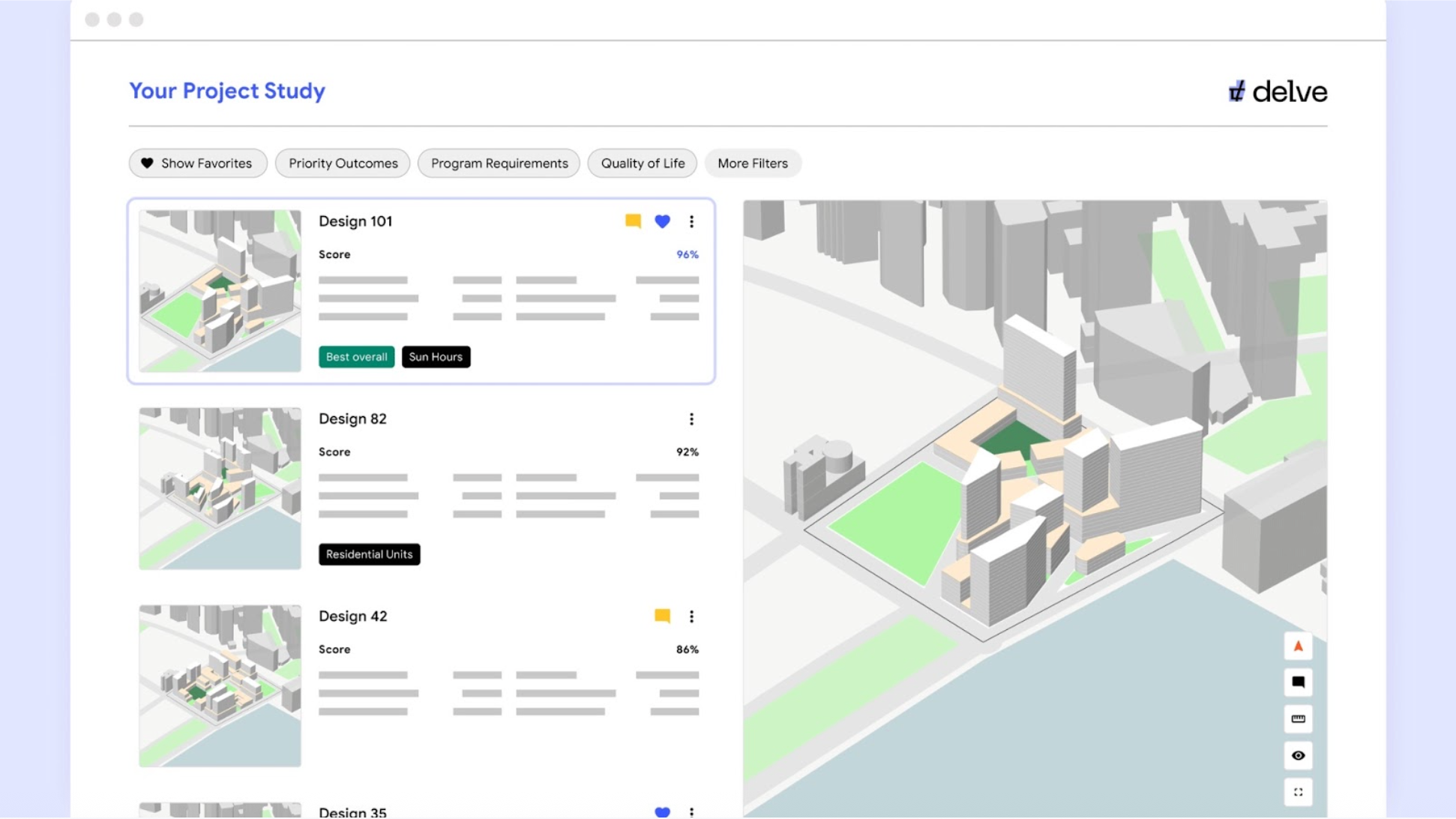

After graduating, Martin taught structural design at the New York Institute of Technology and design computation at the Yale School of Architecture, worked as head of product at the VR real estate visualization company Floored, and helped launch Microsoft’s Azure Spring Cloud product.

Whitney, meanwhile, helped develop a new master’s program at Columbia University called Computational Design Practices. She also served as Director of Product and Associate Director of Design at Sidewalk Labs, a Google initiative focused on building future cities using machine learning. One of Sidewalk Labs’ projects was Delve, a tool that used AI to quickly generate thousands of potential designs for urban development projects.

Yet, as Whitney and Martin worked at the forefront of generative design, they felt a misalignment between the work they wanted to do and their day-to-day realities. “So much of my work was spatial, and based in the physical environment,” Whitney reflects, “but I was still spending most of my day looking at a screen.” Back-to-back video calls and digital interfaces began to feel disconnected from the environmental experiences that she most wanted to explore and expand upon.

She began raising these concerns with her students: How do we interact with computers without constantly looking at screens? How do we make spatial computing a more communal and easily accessible experience? Eventually, after leaving her position at Sidewalk Labs, she and Martin began building a design practice that allowed them to pursue these questions full-time.

Rethinking AI and Spatial Computing

Both Whitney and Martin are skeptical of the dominant narratives around AI. Rather than envisioning AI as a system that writes your emails, shops for you, or “lives your life on your behalf,” they’re interested in something subtler and more human.

“What if,” they suggest, “when I point and say ‘that right there,’ a computer understands what I mean?”

They see AI, particularly language and vision models, not as a replacement for creative labor but as a potential bridge between human intent and the technology that carries it out. Spatial computing has traditionally been a difficult, technical field, often confined to AR/VR ecosystems that require specialized hardware and programming knowledge. Large language models, however, now allow users to express intent conversationally, and that intent can then be translated into code that coordinates cameras, sensors, projectors, and devices.

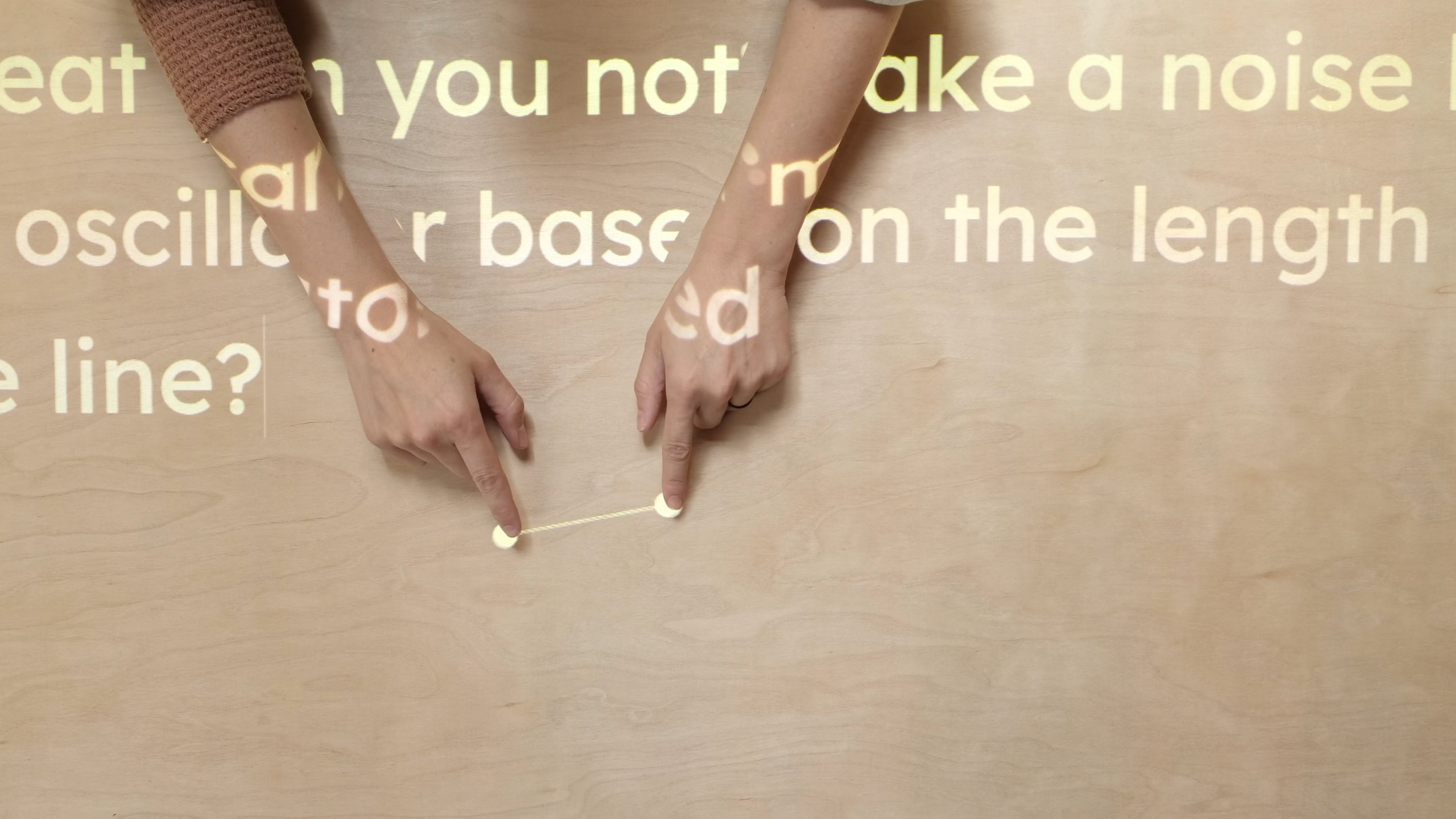

Procession combines language models, computer vision, hand tracking, and object recognition into a unified and intuitive system. People can prompt behaviors — “When my hands come together, do this” — and can iterate quickly, creating new interactions almost instantaneously.

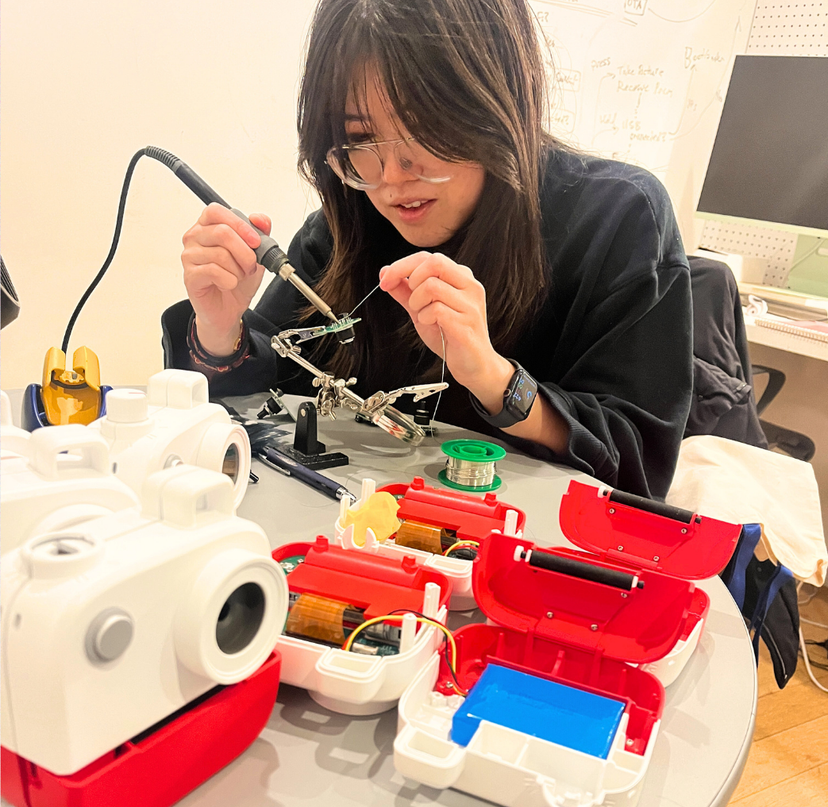

Spatial Pixel Lab student fellows Sena Park and Naomi Shah (ixD class of 2026) building an interaction that quickly translates various languages.

It’s not about “vibe coding” in the trend-driven sense, Whitney and Martin note. It’s about lowering the barrier to entry for people who think spatially but aren’t software engineers.

“Can these types of designers program behaviors in their performance without having to stop and learn a new framework?” is the question they pose.

The Lab as Community Space

Spatial Pixel’s physical lab space at the ixD studio puts the company’s core philosophy into practice. Rather than centering individual headsets, the lab focuses on projection-based tabletop interactions, an accessible entry point into technology that can feel intimidating to new adopters.

In the lab, students can experiment with camera-projector systems that track hands and objects in real time. One architecture student, working in the archives at the Avery Library, built a system using Procession that allowed him to open a book on a table, use his hands to “crop” an area of interest, and capture an image without ever touching a screen. A shake gesture discarded unwanted images. These interactions became a natural extension of his physical workflow, rather than interrupting it.

The lab is designed as an evolving platform, involving students directly in its growth. Student fellows Sena Park and Naomi Shah helped test, document, and refine the system — acting as both users and translators to make the space more approachable and accessible for their fellow students.

The broader ambition for the space is community-driven growth: inviting the wider SVA community, and people beyond it, into the lab to learn and iterate. Beginning this summer, Whitney and Martin will teach a course open to the public as part of SVA’s Continuing Education program. In addition, in fall 2026, a new spatial computing course will open to BFA students across disciplines, inviting students from various majors to work in the Spatial Pixel Lab. Whitney and Martin point to platforms like Arduino as models: open ecosystems that empower experimentation from the ground up. Their goal is for Procession to join that lineage as an accessible spatial computing language that creatives can build upon and contribute to.

Looking Towards a Less Screen-Centered Future

There’s an irony that Whitney and Martin readily acknowledge: to move beyond screens, they still have to use them in the meantime. But the long-term vision for this technology is clear: a form of ambient computing that feels organic, coordinating speech, gesture, and physical objects without pulling a user’s attention back into a device. Whitney and Martin are interested in discovering entirely new interactions, rather than mimicking existing interfaces.

With Procession, Spatial Pixel is working towards a future that is computational at its core but that, if the work succeeds, will feel deeply human.